| Key Facts | Details |
|---|---|
| Article type | 10 requirements gathering questions with explanation, context, and practical examples |
| Primary audience | Practising Business Analysts, BA beginners, and professionals preparing for CBAP or CCBA |
| When to use these questions | Requirements workshops, stakeholder interviews, elicitation sessions, project kick-offs |
| What these questions improve | Requirement clarity, stakeholder alignment, edge case coverage, assumption management |
| BABOK alignment | Maps to the Elicitation and Collaboration knowledge area (BABOK v3) |
| Related techniques | Stakeholder interviews, requirements workshops, process walkthroughs, prototyping |
| Last updated | March 2026 |

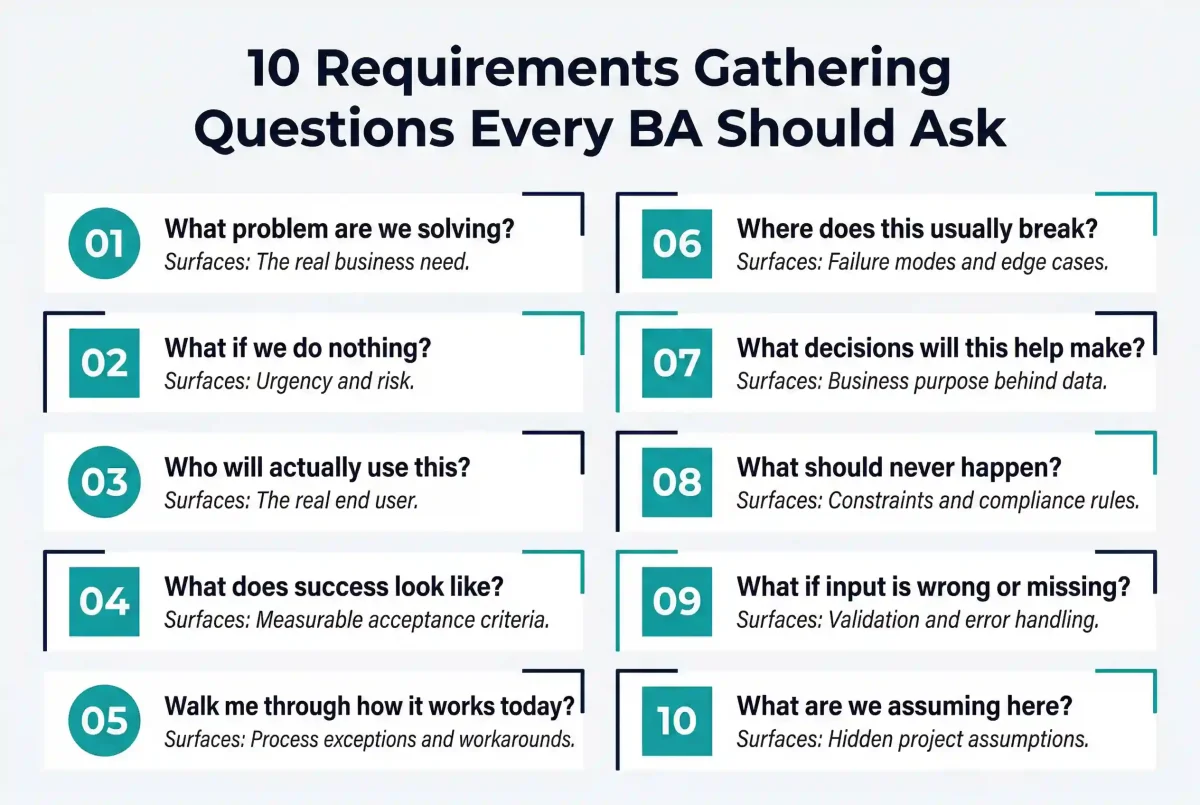

The requirements gathering questions for business analysts that actually make a difference are rarely the ones that appear on a standard elicitation checklist. When you look back at most requirement failures in projects, they almost never happen because someone did not write enough. They happen because something important was not asked at the right time.

In real projects, requirements do not fail on documentation quality. They fail on clarity. And clarity almost always comes from asking better questions — not more questions. Just the right ones.

Over time, experienced Business Analysts develop a set of questions they rely on across every engagement, every methodology, and every stakeholder type. Not as a checklist to run through mechanically, but as a thinking discipline that prevents the blind spots responsible for most requirement rework.

This guide covers the 10 questions that consistently make the most difference — what each one surfaces, when to ask it, and what to do with the answer.

1. What Problem Are We Actually Trying to Solve?

What this question surfaces: the real business need behind the stated solution request.

This sounds obvious, but it is one of the most consistently skipped questions in requirements gathering. Most discussions start with a solution already formed in the stakeholder’s mind: ‘We need a new dashboard’, ‘We need automation for this process’, ‘We need a new feature in the portal.’ The solution arrives before the problem has been properly defined.

Without pausing to ask this question, a Business Analyst risks spending weeks designing a solution to the wrong problem. The stated request is often a symptom, not the root cause. The actual business problem might be a process gap that a simpler change could fix. It might be a data quality issue that no amount of new functionality will resolve. It might be a one-time situation that does not need a permanent solution at all.

How to use the answer: Document the problem statement separately from the solution request and validate it with the stakeholder before proceeding. If the stakeholder cannot articulate the problem clearly without referring to the solution, probe further. ‘If we could not build this, what business impact would that create?’ is a useful follow-up that forces the conversation back to business outcomes.

In BABOK v3, this question maps directly to the ‘Need’ concept in the Business Analysis Core Concept Model (BACCM): every business analysis engagement begins with an identified need, not a proposed solution. Building this discipline early in requirements gathering prevents the most costly type of rework: building something correctly that nobody actually needed.

- Related: The BACCM framework provides a structured way to think about business needs before requirements are defined. See our guide to the BACCM model

2. What Happens Today If We Do Nothing?

What this question surfaces: the real urgency and business risk behind the request.

Not every problem has equal urgency. Some situations are genuinely painful but manageable — the team has developed workarounds, the impact is contained, and the risk of leaving it unsolved is low. Others appear routine but carry escalating business risk — a compliance gap that will trigger regulatory consequences, a process inefficiency that compounds over time, or a customer experience issue eroding retention in ways that are not immediately visible.

By asking what happens if nothing is done, you force the stakeholder to articulate the cost of inaction rather than the appeal of the solution. This reframes the conversation from ‘what do we want’ to ‘what do we need’ — a critical distinction when competing priorities and constrained delivery capacity mean that not everything can be built immediately.

Why this matters for requirement quality: the answer directly influences how requirements are prioritised. A stakeholder who answers ‘nothing much, it would just be nice to have’ is telling you this is a low-priority enhancement. A stakeholder who answers ‘we will miss our compliance deadline and face regulatory penalties’ is telling you this is a critical requirement that must be treated differently in scope, priority, and risk management.

This question also prevents over-engineering. When the impact of inaction is genuinely low, the right solution is often simpler than the original request suggests. Knowing this upfront shapes both the requirements and the solution design in ways that save significant delivery effort.

3. Who Is Actually Going to Use This?

What this question surfaces: the real end user, not the stakeholder’s representation of them.

There is a fundamental distinction in requirements gathering between stakeholders who commission a solution and users who will operate it day to day. These are often different people with different technical comfort levels, different workflows, and different definitions of what makes a solution useful.

Stakeholders frequently speak on behalf of users in requirements sessions. They describe what they believe users need, filtered through their own management perspective. A system that works perfectly for a department head who checks a dashboard twice a week may be entirely unusable for the operations staff who interact with the same system fifty times a day. Both sets of requirements are valid. Neither is complete without the other.

How to use the answer: Once the real users are identified, seek direct access to them for at least part of the elicitation process. Observational techniques — watching users work with the current system before the new one is designed — are particularly effective at surfacing requirements that neither the stakeholder nor the user could have articulated in a conversation. Users often cannot describe what they need, but their behaviour in the current system reveals it clearly.

This question also surfaces assumptions about user technical capability. A solution that assumes high digital literacy, when its users have limited digital experience or little familiarity with the system, will struggle in adoption regardless of how technically correct it is. Understanding who will actually use the solution is the foundation of usability requirements.

4. What Does Success Look Like for You?

What this question surfaces: each stakeholder’s individual definition of a successful outcome.

Different stakeholders define success in fundamentally different ways, even when they are part of the same project team working toward the same deliverable. For a finance stakeholder, success might mean accurate cost reporting to the cent. For an operations manager, it might mean reducing the time a transaction takes to process. For an executive sponsor, it might mean a dashboard that tells a clear performance story to the board in under two minutes.

If a Business Analyst does not ask this question explicitly and early, all three stakeholders will assume their definition of success is shared. Requirements will be documented, reviewed, and approved — and the delivered solution will technically meet the specification while still being experienced as a failure by at least one of them, because their actual expectation was never surfaced.

Making success measurable: follow this question with a probe for measurement. ‘How will you know, six months after go-live, whether this has been successful?’ forces the stakeholder to articulate a measurable outcome rather than a feeling. ‘It will be faster’ becomes ‘we will reduce processing time from four hours to thirty minutes.’ ‘It will be easier to use’ becomes ‘new team members will be able to complete the key workflow without assistance within their first week.’ Measurable success criteria become the foundation of acceptance criteria — without which UAT has no objective standard.
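A measurable criterion is valuable precisely because it can be checked without debate. As a minimal Python sketch (the `SuccessCriterion` class and its fields are illustrative assumptions, not a BABOK or tool-defined structure), the processing-time example above can be expressed as an objective pass/fail test:

```python
from dataclasses import dataclass


@dataclass
class SuccessCriterion:
    """A measurable success criterion agreed with a stakeholder (hypothetical structure)."""
    description: str
    baseline: float              # value measured before the change
    target: float                # value the stakeholder agreed counts as success
    lower_is_better: bool = True  # e.g. processing time; set False for throughput-style targets

    def is_met(self, measured: float) -> bool:
        """Objective pass/fail check that could serve as a UAT acceptance criterion."""
        if self.lower_is_better:
            return measured <= self.target
        return measured >= self.target

    def improvement_needed(self) -> float:
        """Fractional change from baseline to target (0.875 means an 87.5% change)."""
        return abs(self.baseline - self.target) / self.baseline
```

With a baseline of 240 minutes (four hours) and a target of 30 minutes, `improvement_needed()` returns 0.875, `is_met(45)` returns False and `is_met(28)` returns True. The point is not the code itself but the shape of the answer: a baseline, a target and a direction are what turn ‘it will be faster’ into something UAT can verify.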

This question also reveals conflicts between stakeholders early, when they can be resolved through discussion rather than during delivery when the cost of misalignment is much higher.

Want to master requirements gathering from elicitation to documentation?

Techcanvass’s CBAP Certification Training covers the full BABOK v3 Elicitation and Collaboration knowledge area — including stakeholder interviews, requirements workshops, and requirements validation techniques.

5. Can You Walk Me Through How This Works Today?

What this question surfaces: the reality of the current process, including exceptions, workarounds, and pain points that only emerge when someone walks through it step by step.

There is a consistent and significant gap between how stakeholders describe a process in general terms and how that process actually operates in practice. When asked to describe their workflow, stakeholders naturally describe the normal, expected path — the way the process is supposed to work. They omit the exceptions, the manual workarounds, the unofficial steps that experienced users have added over time because the official process does not handle certain scenarios.

Asking someone to walk you through the process step by step, from the moment a transaction or request arrives to the moment it is complete, is one of the most powerful elicitation techniques available to a Business Analyst. As the stakeholder walks through each step, follow up with: ‘And what happens at that point?’, ‘Who does that?’, ‘How long does that typically take?’, ‘And what do you do if that information is not available?’

What this reveals that documentation cannot: process exceptions become visible. ‘We handle those manually in Excel because the system does not support them’ is a requirement hiding inside a workaround. ‘Usually someone calls the client at this point because the form does not capture that field’ is a gap in the current system that will follow the project into the new solution if it is not surfaced. ‘That step only happens in month-end runs’ is a timing constraint that will break the new system if it is not accounted for in the requirements.

This question is also the most effective way to build genuine understanding of the business domain. No amount of documentation review gives a Business Analyst the same depth of insight as watching and listening to a subject matter expert walk through their actual day-to-day work.

- Related: For a full overview of elicitation techniques including observation and prototyping, see our business analyst best practices guide

6. Where Does This Usually Break?

What this question surfaces: the known failure points, edge cases, and weak spots that are very likely to create problems in the new solution if they are not addressed in requirements.

Every process has weak points — places where things regularly go wrong, where exceptions accumulate, where manual intervention is required most often. These are precisely the areas that cause the most trouble in delivered solutions, and they are systematically underrepresented in requirements because stakeholders tend to describe how processes work when everything is normal.

Asking where things usually break is a direct invitation to describe abnormal scenarios. It signals to the stakeholder that you are not looking for the optimistic version of the process — you are looking for the realistic one, including the parts that are difficult or embarrassing to document. This changes the tone of the conversation in a way that surfaces information that would otherwise remain hidden until testing or production.

Common answers and what they mean for requirements: ‘It breaks when the data comes in a different format than expected’ is a data validation requirement. ‘It breaks when two people try to update the record at the same time’ is a concurrency control requirement. ‘It breaks when the client misses a step in the form’ is an error handling and user guidance requirement. ‘It breaks at peak volume at the end of the month’ is a performance and scalability requirement. Each of these answers is a requirement that would probably not have appeared in any formal requirements document without this question.

This question is particularly valuable in replacement or migration projects where the current system has known deficiencies. Understanding what breaks most often in the current system ensures the new solution is designed to resolve those specific failure modes — not just replicate the current system in a new technology.

7. What Decisions Will This Help You Make?

What this question surfaces: the business purpose behind data and reporting requirements — separating information that drives decisions from information that is merely interesting.

This question is especially important when requirements involve reports, dashboards, or analytics outputs. A significant proportion of reporting requirements are gathered based on what data is available rather than what decisions need to be supported. The result is reports that are technically accurate but practically unused — dashboards full of metrics that nobody acts on because they do not map to any actual decision the viewer needs to make.

By asking what decisions a report or dashboard will help the stakeholder make, you force the conversation from data to purpose. ‘I need to see sales by region’ becomes ‘I need to identify which regions are underperforming against target so I can allocate additional resource before the quarter ends.’ The second statement is a requirements brief. The first is a data request that could produce ten different reports, only one of which would actually be useful.

How this improves requirement quality for reports: once the decision is understood, the requirements can be designed backwards from it. What is the decision? Who makes it? When do they make it? What information do they need to make it confidently? What would a bad decision look like, and what information would prevent it? These follow-up questions produce requirements that are directly tied to business value — and produce reports that stakeholders actually use, rather than reports that look impressive but get checked once and abandoned.

This question also filters unnecessary information from scope. When a requirement cannot be connected to a specific decision that someone needs to make, it is a candidate for descoping — reducing delivery effort without reducing business value.

8. What Should Definitely Not Happen?

What this question surfaces: constraints, business rules, compliance requirements, and risk boundaries that stakeholders find easier to express as prohibitions than as specifications.

Some requirements are better articulated as exclusions than as inclusions. Stakeholders who struggle to describe what they want with precision can often describe what they do not want with absolute clarity. ‘Under no circumstances should a user be able to see another user’s account data’ is a security and data access requirement stated as a prohibition. ‘The system must never allow a transaction above a certain threshold to proceed without manager approval’ is an authorisation requirement stated as a constraint.

These negative requirements — things that must not happen — frequently contain the most important business rules in the system. They often encode compliance requirements, legal constraints, data protection obligations, and risk management policies that the organisation has developed over years of experience with what goes wrong when they are not enforced.

Categories this question reliably surfaces: data access restrictions and privacy requirements; financial controls and approval thresholds; regulatory compliance constraints; system integration constraints that prevent certain data from crossing between systems; operational constraints around timing, sequencing, or user roles; and brand or communication standards that govern how the system presents information to external users.

This question is also useful for surfacing unstated assumptions about system behaviour. When a stakeholder says ‘the system should never send a duplicate notification’, they are revealing both a rule and an assumption — that notifications can sometimes be triggered multiple times — that might not have been visible anywhere else in the requirements.

9. What Happens If the Input Is Wrong or Missing?

What this question surfaces: error scenarios, validation requirements, and user guidance specifications that are almost never raised spontaneously by stakeholders.

One of the most consistent sources of requirement gaps in delivered software is incomplete coverage of error scenarios. Systems work well when everything goes according to plan: the data is clean, the user follows the intended path, and all expected inputs arrive in the expected format. Problems appear when reality deviates from those assumptions, which it does constantly in production environments.

Most stakeholders, when describing requirements, describe the happy path: the scenario where everything works as intended. They do not naturally think about what should happen when a user submits a form with a required field blank, enters a date in an unexpected format, uploads a file of an unsupported type, or attempts to perform an action they are not authorised to perform. These scenarios are left undefined in the requirements and are then handled inconsistently, or incorrectly, by the development team.

What this question generates in requirements:

- Field-level validation rules: which formats are acceptable, and what should happen when the wrong format is entered.
- Mandatory and optional field definitions: what happens when a required field is empty.
- Error message specifications: what the user should see, and be guided to do, when their input is incorrect.
- System behaviour for partial data: whether a record can be saved in an incomplete state, and if so, what the implications are for downstream processing.
- Boundary condition requirements: what happens at the edges of acceptable values, not just in normal ranges.

These requirements are often labelled ‘edge cases’ and deprioritised. In practice, they are the requirements that determine whether a system is usable in production or requires constant support intervention because users regularly encounter unhandled error states.
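To see how the answers to this question become testable requirements, here is a minimal Python sketch for a single form. Everything in it is hypothetical: the field names (`client_name`, `start_date`, `attachment`, `amount`), the date format, the accepted file types, the amount limit and the error wording are assumptions for illustration; in a real project each one would come from the stakeholder’s answers.

```python
from datetime import datetime

# Hypothetical rules for an illustrative request form.
ALLOWED_FILE_TYPES = (".pdf", ".docx")  # assumed: any other file type is rejected
MAX_AMOUNT = 10_000                     # assumed upper boundary for the amount field


def validate_request(form: dict) -> list[str]:
    """Return user-facing error messages; an empty list means the input is valid."""
    errors: list[str] = []

    # Mandatory field: what happens when a required field is empty?
    if not str(form.get("client_name") or "").strip():
        errors.append("Client name is required.")

    # Field format: what happens when a date arrives in an unexpected format?
    try:
        datetime.strptime(str(form.get("start_date") or ""), "%Y-%m-%d")
    except ValueError:
        errors.append("Start date must be a valid date in YYYY-MM-DD format.")

    # Unsupported file type: tell the user which formats are accepted.
    attachment = form.get("attachment")
    if attachment and not attachment.lower().endswith(ALLOWED_FILE_TYPES):
        errors.append("Attachment must be a PDF or Word (.docx) file.")

    # Boundary condition: what happens at the edges of acceptable values?
    amount = form.get("amount")
    if not isinstance(amount, (int, float)) or not 0 < amount <= MAX_AMOUNT:
        errors.append(f"Amount must be greater than 0 and no more than {MAX_AMOUNT}.")

    return errors
```

Calling `validate_request({"client_name": "", "start_date": "31/01/2026", "amount": 500})` returns two messages, one for the empty name and one for the date format. Each rule and each message is a decision a stakeholder has to confirm, which is exactly why this question needs to be asked rather than left to the development team.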

10. Is There Anything We Are Assuming Here?

What this question surfaces: the silent assumptions that everyone in the room has accepted as true but that nobody has formally validated — and that will cause failures if they turn out to be wrong.

Every project operates on a layer of unspoken assumptions. ‘This data will always be available when we need it.’ ‘Users will follow the documented process.’ ‘The integration with the external system will work as described in their documentation.’ ‘The volume will remain within the current range.’ ‘The regulatory environment will not change before go-live.’ These assumptions are rarely documented. They are simply accepted as background conditions — until one of them turns out to be wrong.

When a hidden assumption fails in production, the result is a defect, a scope change, or a requirement that nobody anticipated. The root cause is almost always that the assumption was never surfaced and validated during requirements gathering. The question ‘is there anything we are assuming here?’ makes hidden assumptions visible at the point where they are cheapest to validate — before design and development have committed to a direction based on them.

How to run an assumptions session: at the end of any requirements discussion, ask this question explicitly. Then give the room time to answer it. Silence is common at first — not because there are no assumptions, but because surfacing them requires the stakeholder to think about what has been left unspoken. Prompt with category questions: ‘Are we assuming anything about the data quality? About user behaviour? About system availability? About regulatory requirements? About the volume of transactions?’ Each category typically surfaces at least one assumption that would otherwise remain invisible.

Document every assumption formally. Assign an owner to validate each one. If an assumption cannot be validated before requirements sign-off, it should be explicitly logged as a risk — with a plan for what happens to the requirements if the assumption turns out to be incorrect.
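One way to keep that discipline is a simple assumption log. The Python sketch below assumes a minimal structure of our own devising (the `Assumption` fields, the `Status` values and both helper functions are hypothetical, not part of BABOK or any specific tool): each assumption has a category, an owner and a validation status, and the log can be queried at sign-off.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    UNVALIDATED = "unvalidated"
    CONFIRMED = "confirmed"
    DISPROVED = "disproved"


@dataclass
class Assumption:
    """One entry in a hypothetical assumption log."""
    statement: str
    category: str               # e.g. data quality, user behaviour, system availability, volume
    owner: str                  # person responsible for validating the assumption
    status: Status = Status.UNVALIDATED
    impact_if_wrong: str = ""   # what happens to the requirements if it proves false


def open_risks(log: list[Assumption]) -> list[Assumption]:
    """Assumptions still unvalidated at sign-off: these should be logged as risks."""
    return [a for a in log if a.status is Status.UNVALIDATED]


def requirements_to_revisit(log: list[Assumption]) -> list[Assumption]:
    """Assumptions found to be false: the requirements built on them need review."""
    return [a for a in log if a.status is Status.DISPROVED]
```

For example, a log holding ‘The volume will remain within the current range’ (unvalidated, owned by an operations lead) and ‘The integration with the external system will work as described in their documentation’ (confirmed) would return only the first entry from `open_risks`. Separating unvalidated from disproved assumptions reflects the guidance above: the first becomes a logged risk with a contingency plan, the second a change to the requirements.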

Quick Reference: What Each Question Surfaces

| Question | What It Surfaces | When to Ask It |
|---|---|---|
| What problem are we actually trying to solve? | The real business need behind the solution request; prevents building the right solution to the wrong problem. | The first question in any requirements session, before any solution discussion begins. |
| What happens today if we do nothing? | Urgency, business risk, and the true priority of the request. | During project scoping and requirements prioritisation. |
| Who is actually going to use this? | The real end user vs the stakeholder who commissioned the solution. | Early in elicitation, before user requirements are defined. |
| What does success look like for you? | Each stakeholder’s individual definition of a successful outcome, and measurable acceptance criteria. | At the start of elicitation, before requirements are detailed. |
| Can you walk me through how this works today? | Process exceptions, workarounds, hidden steps, and pain points that are invisible in general descriptions. | During process analysis, with a subject matter expert walking through their actual work. |
| Where does this usually break? | Known failure points, edge cases, and performance constraints that will repeat in the new solution if unaddressed. | After the current-state walkthrough, specifically probing for abnormal scenarios. |
| What decisions will this help you make? | The business purpose behind data and reporting requirements, connecting metrics to actual decisions. | When gathering analytics, reporting, or dashboard requirements. |
| What should definitely not happen? | Constraints, compliance requirements, data access rules, and business rule boundaries. | After positive requirements are defined, explicitly probing for exclusions and constraints. |
| What happens if the input is wrong or missing? | Validation requirements, error handling specifications, and user guidance for exception scenarios. | During detailed functional requirements work, for every data input and user action in scope. |
| Is there anything we are assuming here? | Silent project assumptions that will cause failures if they are wrong and have not been validated. | At the end of every requirements session and before requirements sign-off. |

Why These Questions Work: The Principle Behind Them

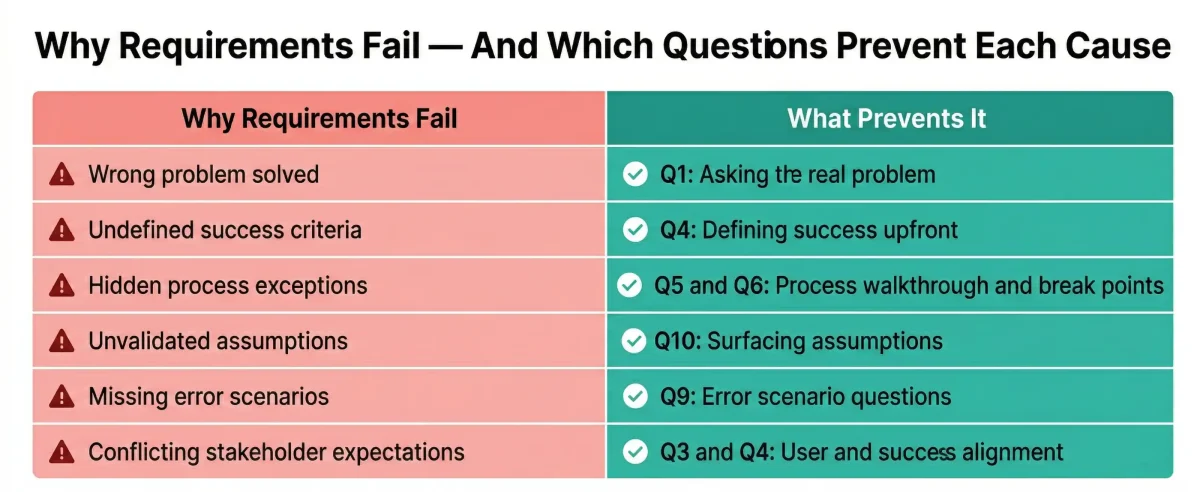

Each of these ten requirements gathering questions works for the same underlying reason: they slow the rush to solution and bring clarity to the problem. Individually, they seem simple. Together, they do something that formal requirements templates cannot do — they change the quality of the conversation that requirements are built from.

Requirements fail when important things are assumed, skipped, or left implicit. These questions make the important things explicit. They surface the business context, the real users, the failure modes, the constraints, the hidden assumptions, and the measurable definition of success — all before a single requirement is written.

The long-term shift: Business Analysts who consistently use these questions develop something more valuable than a reliable checklist. They develop a habit of mind — a way of listening to stakeholder conversations that automatically identifies what is missing, what is assumed, and what needs probing before the conversation moves on. When these questions become instinctive, the quality of requirements improves not because the documents are better structured, but because the conversations that produce them are better guided.

Good requirements do not come from writing better sentences. They come from having better conversations. These ten questions are the starting point for that shift.

- Related: For a structured approach to requirements elicitation as part of BABOK v3, see our CBAP certification requirements guide

Ready to take your requirements skills to the certification level?

Techcanvass is an IIBA-endorsed education provider. Our BA certification programmes cover the full BABOK v3 Elicitation and Collaboration knowledge area — with real project case studies and exam preparation.

Frequently Asked Questions: Requirements Gathering Questions

What are the most important requirements gathering questions for business analysts?

The most important questions surface what stakeholders cannot articulate spontaneously. These include: ‘What problem are we actually trying to solve?’ (to avoid solving the wrong problem), ‘What does success look like for you?’ (to define measurable acceptance criteria), ‘Can you walk me through how this works today?’ (to surface process exceptions), ‘Where does this usually break?’ (to uncover edge cases), and ‘Is there anything we are assuming here?’ (to expose unvalidated assumptions).

How do these questions improve requirement quality?

They surface information that stakeholders don’t volunteer spontaneously, such as error scenarios, conflicting priorities, and hidden constraints. Structured questions force these elements into the conversation before design begins, resulting in requirements that need less rework during testing and solutions that stakeholders genuinely value.

When should these questions be asked during a project?

They should be asked at multiple points: problem definition and urgency at project kick-off; user and success definition before detailed work begins; process walkthroughs during elicitation; and assumption checks at the end of every session and before sign-off.

What is the difference between elicitation techniques and requirements gathering questions?

Elicitation techniques are the methods used (interviews, workshops, observation). Requirements gathering questions are the specific prompts used within those techniques to surface particular information. For example, a stakeholder interview is the technique, and ‘Where does this usually break?’ is the question used inside it.

Why is asking about hidden assumptions so important?

Hidden assumptions are major causes of project failure. By explicitly asking about them, a BA brings these conditions into the open where they can be validated. If an assumption is found to be uncertain, requirements can be adjusted before the design is committed to a false premise.

How do these questions map to BABOK v3?

These questions map directly to the Elicitation and Collaboration knowledge area, specifically the ‘Conduct Elicitation’ task. The problem definition questions map to the BACCM concept of ‘Need’, while success questions map to requirements validation and acceptance criteria.

What should a BA do if a stakeholder cannot answer these questions?

Treat the difficulty as important information. If they can’t define success, the business case may be weak. If they can’t walk through a process, arrange an observation session. If assumptions aren’t clear, run a structured assumption-surfacing exercise by category (data, user behaviour, systems).

Should all ten questions be asked in every requirements session?

Don’t ask all ten in every session. Ask the right questions at the right point. Focus on problem definition during kick-off, process details during elicitation, and assumptions during reviews. The best sessions feel like natural conversations, not interrogations.
