Quick Answer

What questions come up in a product manager interview?

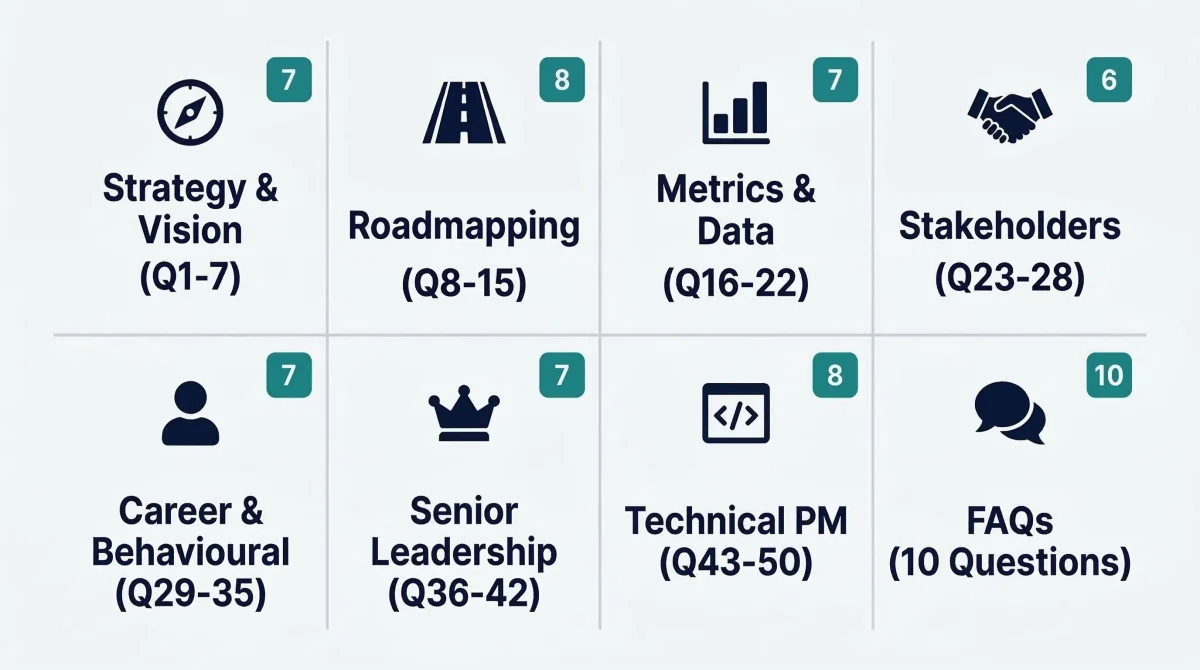

Product manager interviews typically cover five key areas: product strategy and vision, roadmapping and prioritisation, metrics and data analysis, stakeholder management, and behavioural leadership scenarios. Most interviewers also include a case study to test your problem-solving skills.

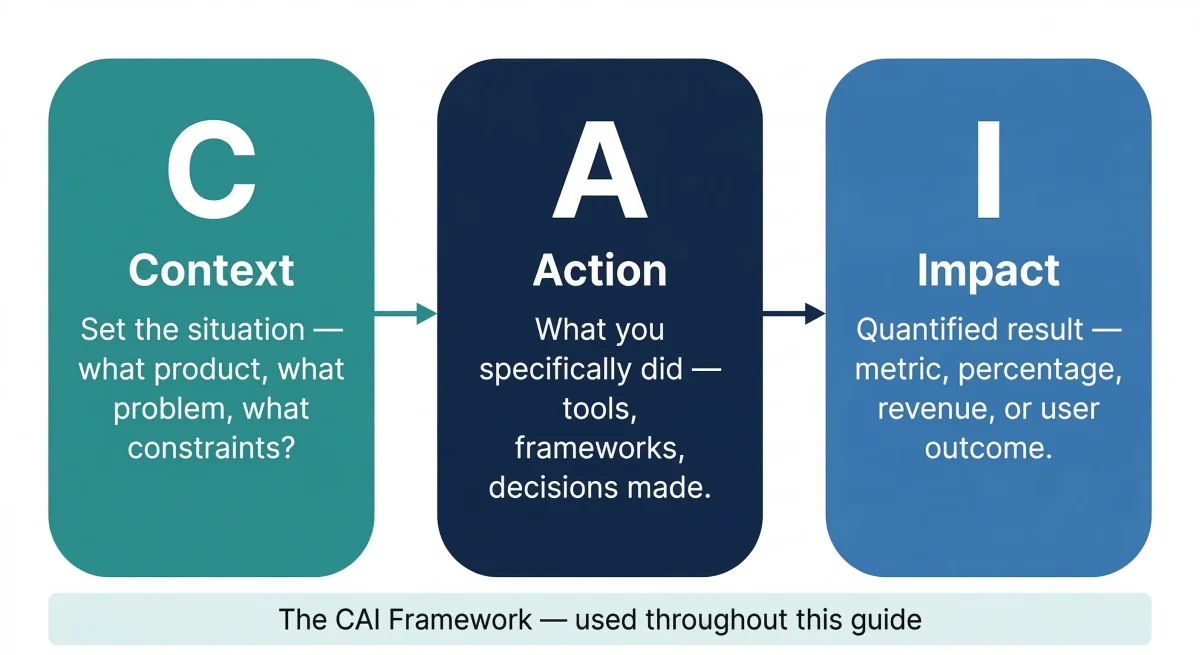

Best format for answers: Context (what was the situation) > Action (what you did) > Impact (measurable result). See the 50 questions and full-length answers below.

| Key Facts | Details |
|---|---|
| Questions in this guide | 50 product manager interview questions with full answers |
| Experience levels covered | Fresher / Associate, Mid-level, Senior, VP / Director |
| Frameworks referenced | RICE, MoSCoW, Kano, North Star, OKRs, JTBD, A/B Testing |
| Answer format used | Context, Action, Impact (CAI): the format preferred by top companies |
| Best suited for | Candidates interviewing at SaaS, e-commerce, fintech, and healthtech companies |
| Last updated | March 2026 |

What Interviewers Actually Look For in a Product Manager

A product manager interview is not just a test of what you know. It is a test of how you think, how you communicate under pressure, and whether you can make trade-off decisions with incomplete information.

Senior interviewers at companies like Flipkart, Razorpay, Swiggy, or any FAANG company are looking for three things above all else:

- Structured thinking: Can you break a vague problem into components, make assumptions explicit, and reach a defensible conclusion?

- Data orientation: Do you reach for metrics naturally, or do you use gut feel without measurement?

- Business awareness: Do your product decisions connect back to revenue, retention, or strategic goals — or do they float in a vacuum?

This guide covers 50 of the most common product manager interview questions and answers, organised by category. Every answer follows the Context-Action-Impact (CAI) framework, which is the format that earns the highest scores in structured PM interviews. Context sets the situation, Action describes what you did, and Impact quantifies the outcome.

If you are transitioning from a Business Analyst background, you will find a dedicated section later in this guide that addresses how to frame your BA experience in PM interview language.

To sharpen the full range of PM skills — from product strategy to stakeholder communication — consider structured training through our Product Management Course before your interview rounds.

Strategy and Vision Questions (Q1–Q7)

1. How do you define a successful product?

A successful product delivers measurable value to users while meeting business objectives. Value and profitability must coexist: a product that users love but that does not generate revenue is not sustainable, and neither is a profitable one that fails to retain its users.

Example (CAI): At a B2B SaaS company, our onboarding feature had low adoption. I defined success as first-week feature activation above 60%. We redesigned the onboarding flow using guided checklists and reduced time-to-first-value from 4 days to 1 day. Activation rose from 41% to 67% within two months.

2. How do you build a product roadmap?

I begin by anchoring the roadmap to company-level strategic goals, not a list of features. I then run discovery sessions with engineering, design, sales, and customer success to surface problems worth solving. Initiatives are grouped into themes, prioritised using RICE or Value vs. Effort scoring, and presented to leadership with quarterly milestones.

Example (CAI): At a logistics SaaS firm, the roadmap was fragmented across teams. I introduced quarterly OKR-linked themes — retention, enterprise expansion, and automation. Within one cycle, 80% of sprint work mapped directly to a roadmap theme, reducing context-switching and improving sprint velocity by 22%.

3. How do you prioritise features when resources are limited?

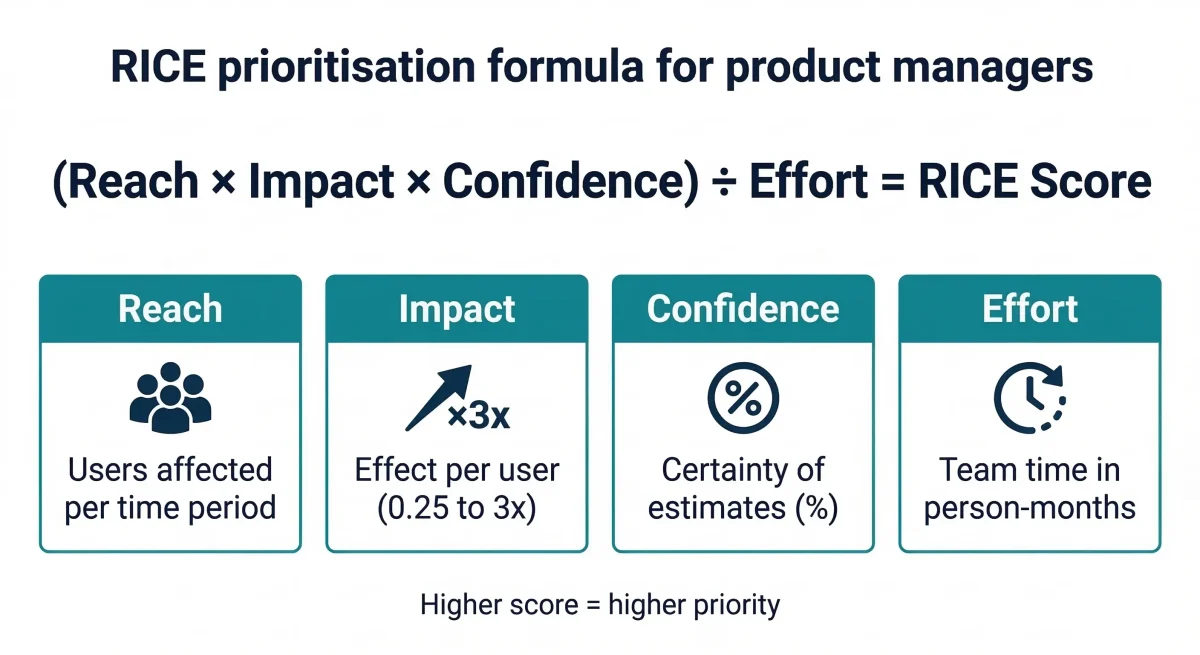

I use the RICE framework (Reach, Impact, Confidence, Effort) as the default scoring model. For sprint-level decisions I use MoSCoW (Must Have, Should Have, Could Have, Won’t Have). When there are competing stakeholder opinions, I add a customer-evidence layer — support ticket volume, NPS drivers, and sales-loss analysis — to make the case data-driven rather than opinion-based.

Example (CAI): A team of four engineers had twelve potential features queued. RICE scoring revealed that ‘bulk upload’ had 3x the reach and similar effort to a ‘UI redesign’ request from sales. We shipped bulk upload first. It reduced customer support tickets by 18% and was cited in three enterprise renewal conversations.
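The RICE comparison in this example can be sketched in a few lines. This is a minimal illustration using the commonly cited formula (Reach × Impact × Confidence ÷ Effort); the `Feature` class and every number below are hypothetical, chosen only to mirror the "3x the reach, similar effort" situation, and are not figures from the example.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per quarter (hypothetical)
    impact: float      # relative impact per user, e.g. 0.25 to 3
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months

    @property
    def rice(self) -> float:
        # RICE score: reach * impact * confidence / effort
        return self.reach * self.impact * self.confidence / self.effort


def rank_by_rice(features):
    """Return features sorted from highest to lowest RICE score."""
    return sorted(features, key=lambda f: f.rice, reverse=True)


# Hypothetical inputs: same impact, confidence, and effort, 3x the reach.
backlog = [
    Feature("UI redesign", reach=1000, impact=1.0, confidence=0.8, effort=2.0),
    Feature("bulk upload", reach=3000, impact=1.0, confidence=0.8, effort=2.0),
]
ranked = rank_by_rice(backlog)
for feature in ranked:
    print(f"{feature.name}: {feature.rice:.0f}")
```

With effort held equal, the ranking is driven entirely by reach, which is why the feature with the wider audience comes out on top.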

4. What frameworks do you use for product prioritisation?

The framework depends on the decision type. RICE works well for feature prioritisation when you have usage data. MoSCoW works for sprint planning under tight deadlines. The Kano model is useful for distinguishing between basic expectations, performance features, and delight features. ICE (Impact, Confidence, Ease) is a faster version of RICE for early-stage teams.

Example (CAI): For a mobile payments app, we needed to decide between three features for a two-week sprint. I ran a Kano survey with 30 existing customers. The results showed that ‘split bill’ was a basic expectation (high dissatisfaction if absent) while ‘scheduled payments’ was a delight feature. We shipped split bill in the next sprint.

5. How do you define product vision?

Product vision is the long-term destination — a North Star statement that answers ‘what world are we trying to create for our users?’ It must be ambitious enough to guide decisions over 2-3 years but concrete enough that every team member can translate it into daily work.

Example (CAI): For a last-mile delivery product, the vision I helped define was ‘Make same-day delivery as reliable as a utility bill.’ This single statement resolved three roadmap debates: it justified investing in real-time tracking (visibility = reliability), deprioritised a feature request for a loyalty programme, and aligned KPI choices toward delivery success rate over app downloads.

6. How do you validate a new product idea?

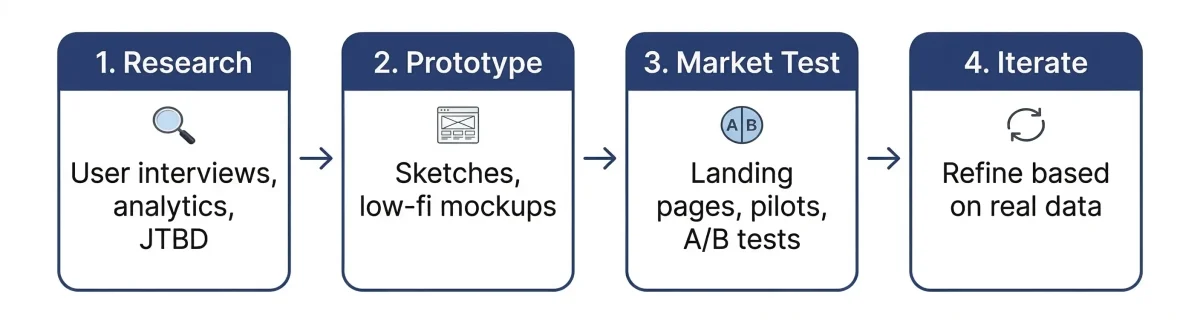

Validation should follow a phased approach: start with problem validation (do users have this problem?), then solution validation (do they want our solution?), then willingness-to-pay (will they pay for it?). Each phase should be progressively more expensive in terms of effort. I use user interviews and analytics review for phase one, prototypes and landing pages for phase two, and pilots or paid pre-orders for phase three.

Example (CAI): We explored adding a virtual consultation feature to a healthcare app. Before writing a single line of code, we built a landing page describing the feature and drove traffic with a small email campaign to our existing user base. 420 users signed up for a waitlist in 7 days, validating demand. We then ran 12 user interviews to validate willingness to pay before scoping the MVP.

7. How do you do market analysis?

Market analysis combines three lenses: competitive intelligence (what are others building and where are their gaps?), segment analysis (which user groups are underserved or growing fastest?), and trend analysis (which macro shifts create tailwinds for new solutions?). I use SimilarWeb for traffic benchmarking, Crunchbase for funding signals, and primary customer interviews for qualitative depth.

Example (CAI): While preparing the roadmap for a B2B analytics product, I ran a competitor analysis across six tools. I identified that no tool offered a ‘change impact simulator’ — a way to model how a process change would affect downstream metrics. We built that feature and it became the differentiating capability in three enterprise deal pitches.

Roadmapping and Prioritisation Questions (Q8–Q15)

8. What is your MVP strategy?

An MVP is the smallest set of features that tests your most critical assumption — not the smallest version of the full product. It should be designed to generate a specific learning, not just to ship something fast. I identify the core value hypothesis, list the assumptions that must be true for it to work, and build only what tests the highest-risk assumption.

Example (CAI): For a B2B feature request portal, the highest-risk assumption was ‘users will bother to submit structured requests rather than just emailing support.’ Rather than build a full portal, we created a Google Form and linked it from the support page. 73 structured requests came in over three weeks, validating the assumption before we built the real feature. This saved an estimated 8 weeks of development.

9. How do you manage a product backlog?

A healthy backlog is not a wish list — it is a curated, prioritised queue of validated problems and solutions. I run bi-weekly refinement sessions with engineering and design to review the top 20 items, clarify acceptance criteria, and remove stale items. I also maintain a separate ‘discovery backlog’ for unvalidated ideas that need research before they enter the delivery backlog.

Example (CAI): I inherited a backlog with 180 items, some from two years prior. I ran a ‘backlog bankruptcy’ session, archiving everything older than 6 months with no recent customer evidence, which reduced the backlog to 45 items. Sprint focus improved immediately and the team spent less time in backlog grooming.

10. How do you set OKRs?

OKRs work best when they are set bottom-up with top-down alignment, not dictated from leadership. The objective should describe a qualitative destination. Key results should be outcome-based (not output-based) and measurable. I set 3 key results per objective and review them monthly, not just at the end of the quarter.

Example (CAI): Objective: Improve product activation. Key Results: (1) Increase percentage of users completing onboarding by 25%. (2) Reduce time-to-first-value from 4 days to 2 days. (3) Achieve NPS above 50 for first-time users. We achieved all three in Q2, which correlated with a 14% improvement in 90-day retention.

11. How do you control feature creep?

Feature creep happens when the cost of saying yes is invisible. I make it visible by requiring a ‘change impact doc’ for every new feature request that comes in mid-sprint. The doc must answer three questions: what user problem does this solve, what is the evidence that this problem is worth solving now, and what will we deprioritise to make room for this? In my experience, this process alone reduces informal feature requests by around 60%.

Example (CAI): A sales team regularly brought mid-sprint feature requests from enterprise prospects. I introduced a one-page request template tied to our RICE scoring model. Of the 12 requests submitted in the next quarter, only 3 scored high enough to enter the roadmap. Sales initially pushed back but accepted the system when they saw it accelerated delivery of the features that did make the cut.

12. How do you balance short-term delivery with long-term vision?

I use a dual-track model: a tactical track for sprint-level delivery and a strategic track for quarterly initiatives. The tactical track handles bugs, retention improvements, and quick wins. The strategic track handles platform investments, new market initiatives, and foundational work. I protect the strategic track from being consumed by tactical demands by allocating a fixed percentage of engineering capacity to it — typically 25-30%.

Example (CAI): While delivering rapid UI improvements to reduce churn, we simultaneously ran a backend modernisation workstream that would enable multi-tenancy (a requirement for enterprise expansion). Without the ring-fenced capacity, the modernisation would have been delayed by eight months. It shipped on schedule and directly enabled landing our first enterprise client six months later.

13. How do you decide when to sunset a product?

Sunsetting is the right call when three conditions align: usage is declining and not recoverable through iteration, the cost of maintaining the product (engineering support, infrastructure, documentation) exceeds its revenue contribution, and the opportunity cost of keeping the team on maintenance is measurable. I also look at whether the product can be migrated or redirected rather than shut down completely.

14. What is a North Star Metric?

A North Star Metric is the single metric that best captures the core value your product delivers to users and that predicts long-term business health. It differs from a revenue metric because it is user-value focused. When the North Star is healthy, revenue typically follows. When it declines, it is an early warning signal.

Example (CAI): For a marketplace connecting freelancers and clients, revenue was the default metric but it lagged. I proposed ‘completed projects per month’ as the North Star because it captured value for both sides of the marketplace. When completed projects grew, repeat bookings grew 3-4 weeks later, and when it dropped, we identified a friction point in the review flow before it affected revenue.

15. How do you refine a product backlog?

Backlog refinement is most effective when it is continuous, not just a weekly ceremony. I use a three-tier backlog: items that are sprint-ready (fully defined, acceptance criteria written, estimated), items in refinement (understood but not yet sprint-ready), and items in discovery (not yet validated). I hold a 30-minute async pre-refinement review before each refinement session so engineers arrive with questions, not confusion.

Example (CAI): A team was losing 90 minutes per week in backlog grooming because items arrived underspecified. I introduced a Definition of Ready checklist: a user story, acceptance criteria, a design link, and a Jira estimate. Items that did not meet DoR could not enter the top-20 backlog. Meeting time dropped to 45 minutes and sprint commitment accuracy improved from 68% to 87%.
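A Definition of Ready gate like this one is simple enough to automate. The sketch below assumes backlog items are plain dictionaries; the field names and the `missing_dor_fields` helper are hypothetical and do not correspond to any real Jira API.

```python
# Fields the checklist requires (names are illustrative assumptions).
REQUIRED_FIELDS = ("user_story", "acceptance_criteria", "design_link", "estimate")


def missing_dor_fields(item):
    """Return the required fields that are absent or empty on a backlog item."""
    return [field for field in REQUIRED_FIELDS if not item.get(field)]


def is_ready(item):
    """An item is sprint-ready only when no required field is missing."""
    return not missing_dor_fields(item)


# Hypothetical item with empty acceptance criteria.
draft_item = {
    "user_story": "As an admin, I want to export usage data as CSV.",
    "acceptance_criteria": "",
    "design_link": "https://example.com/design/export",
    "estimate": 3,
}
print(missing_dor_fields(draft_item))
print(is_ready(draft_item))
```

Note that this check treats any falsy value as missing, so an estimate of 0 would also fail the gate. A real workflow might want to treat that case differently.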

Metrics and Data Questions (Q16–Q22)

16. What metrics do you track for product success?

Metrics should be selected based on the product’s growth stage. For early-stage products, I focus on activation and retention. For growth-stage products, I add engagement depth (DAU/MAU ratio, feature adoption rate) and monetisation signals. For mature products, I track efficiency metrics like support ticket volume per active user and net revenue retention.

Example (CAI): For a B2C fintech app, I moved the team from tracking downloads (a vanity metric) to tracking ‘active transacting users’ (users who completed at least one transaction in the past 30 days). This shift revealed that 40% of installs were churning before a first transaction. We redesigned the post-install flow and reduced pre-transaction churn from 40% to 24% in six weeks.

17. How do you measure customer satisfaction?

I use a combination of NPS (Net Promoter Score) for relationship-level satisfaction, CSAT (Customer Satisfaction Score) for transactional satisfaction at specific touchpoints, and CES (Customer Effort Score) for friction measurement. NPS surveys go out quarterly, CSAT fires after key product actions (support resolution, onboarding completion), and CES is embedded in complex flows.

Example (CAI): Post-launch NPS dropped from 48 to 31 after a redesign. Rather than reverting, I ran CSAT surveys targeting users who had used both the old and new versions. The low score was concentrated in users over 45 who struggled with the new navigation. We added a simplified ‘classic mode’ toggle and NPS recovered to 54 within eight weeks.
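For reference, NPS is conventionally computed from 0-10 "how likely are you to recommend" ratings: the percentage of promoters (9-10) minus the percentage of detractors (0-6). The sketch below uses that standard definition; the sample ratings and the function name are made up for illustration.

```python
def net_promoter_score(ratings):
    """Compute NPS from a list of 0-10 ratings.

    Promoters score 9-10, detractors 0-6, passives 7-8.
    The result ranges from -100 to 100.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical survey responses: 5 promoters, 2 passives, 3 detractors.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(sample))
```

Running the same function separately on each user segment (for example, by age band) is one way to locate where a drop in the overall score is concentrated.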

18. How do you use data in product decisions?

Data should inform decisions, not make them. I treat quantitative data as the ‘what’ (what is happening) and qualitative data as the ‘why’ (why it is happening). Quantitative data from analytics tools reveals patterns at scale. Qualitative data from user interviews and session recordings provides the context to interpret those patterns correctly.

Example (CAI): Analytics showed a 30% drop-off at step 3 of our checkout flow. The quantitative data told us where the problem was, but not why. Six user interviews revealed that step 3 asked for a billing address, and many users had a different billing and shipping address but assumed the form did not support that. A small copy change (‘This can be different from your shipping address’) reduced drop-off by 19%.

19. How do you set up and interpret A/B tests?

A/B testing requires three things to be valid: a clearly defined hypothesis (‘If we change X, then Y will improve because Z’), a sufficient sample size calculated before the test runs (to avoid stopping early based on noise), and a single primary metric per test. I treat everything else as secondary metrics to watch, not to decide on.

Example (CAI): We hypothesised that changing the primary CTA from ‘Get Started’ to ‘Start Free Trial’ would improve sign-up rate because it sets clearer expectations. Sample size calculation required 2,000 visitors per variant for 80% power. After 8 days, the ‘Start Free Trial’ variant showed a 12% improvement in sign-up rate at 95% confidence. We shipped it.
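The pre-test sample size step can be sketched with the standard normal-approximation formula for comparing two proportions. The baseline conversion rate and expected lift below are hypothetical, since the example does not state them, so the output is not meant to reproduce the 2,000-per-variant figure.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided test.

    baseline: control conversion rate, e.g. 0.10
    relative_lift: smallest lift worth detecting, e.g. 0.20 for +20%
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1 and p1 != p2):
        raise ValueError("rates must be distinct and strictly between 0 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Hypothetical inputs: 10% baseline sign-up rate, 20% relative lift.
print(sample_size_per_variant(0.10, 0.20))
```

The required sample grows quickly as the detectable lift shrinks, which is why the calculation belongs before the test starts rather than after the first promising result.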

20. What is the DAU/MAU ratio and why does it matter?

The DAU/MAU ratio (Daily Active Users divided by Monthly Active Users) measures stickiness — how often your users return. A ratio of 0.5 means users return, on average, 15 days per month. Messaging apps target above 0.6. Utility apps (tax filing, insurance) may naturally be below 0.1. The benchmark depends on the use-case frequency. What matters is whether the ratio is improving over time.

Example (CAI): Our productivity tool had a DAU/MAU ratio of 0.18, low for a tool users were supposed to use daily. I ran a cohort analysis and found that users who completed two specific actions in their first week (creating a template and sharing a document) had a DAU/MAU of 0.41. We redesigned onboarding to guide all new users to those two actions, and the overall ratio improved to 0.27 over three months.
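As a rough illustration of how the ratio is computed, the sketch below takes a list of `(user_id, date)` activity events, averages daily actives over a window, and divides by the distinct users seen in that window. The event format and function name are assumptions; real analytics tools may define "active" and the window differently.

```python
from datetime import date, timedelta


def dau_mau_ratio(events, window_days):
    """Average daily active users divided by distinct users in the window.

    events: iterable of (user_id, date) pairs
    window_days: list of dates that make up the month being measured
    """
    daily = {day: set() for day in window_days}
    for user_id, day in events:
        if day in daily:
            daily[day].add(user_id)
    monthly_users = set().union(*daily.values())
    if not monthly_users:
        return 0.0
    average_dau = sum(len(users) for users in daily.values()) / len(window_days)
    return average_dau / len(monthly_users)


# Hypothetical 30-day window with one user active on 15 of those days.
window = [date(2026, 3, 1) + timedelta(days=i) for i in range(30)]
events = [("u1", day) for day in window[:15]]
print(dau_mau_ratio(events, window))
```

The single-user case makes the interpretation concrete: a user who shows up on 15 of 30 days yields a ratio of 0.5.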

21. How do you approach product discovery?

Product discovery answers the question ‘what should we build next?’ before the delivery team asks ‘how do we build it?’ I run discovery in parallel with delivery, using a separate discovery backlog. Discovery involves continuous user interviews (minimum 4-6 per month), data analysis from product analytics, and input from customer success and sales teams who hear user problems daily.

Example (CAI): While the delivery team shipped a backlog of known features, I ran a discovery sprint focused on users who had churned in the previous quarter. Exit interviews with 14 churned users revealed a common theme: they could not share reports with stakeholders who did not have a product login. A ‘viewer seat’ feature was added to the roadmap and reduced churn in that segment by 28% after launch.

22. How do you decide which problems to solve first?

I score problems on three dimensions: Impact (what is the potential upside if solved: revenue, retention, NPS?), Urgency (is this a growing problem or a stable one? Is a competitor about to solve it?), and Feasibility (can we solve it with current team and technology within a reasonable timeframe?). High-impact, high-urgency problems win, even if they are harder to solve than more feasible but lower-value ones.

Example (CAI): Our team had two problems competing for sprint priority: a checkout bug affecting 8% of transactions (medium urgency, high impact) and a UI refresh request from the marketing team (low urgency, medium impact). The checkout bug was causing a measurable revenue drain of approximately 1.2% of monthly revenue. We fixed the bug first, recovering 23% of the affected revenue within the first week.

Stakeholder Management Questions (Q23–Q28)

23. How do you handle conflicting stakeholder priorities?

Conflict between stakeholders usually signals a missing shared framework. My first step is to surface the conflict explicitly — holding a joint meeting rather than managing each party separately. Then I anchor the discussion to shared business goals rather than individual requests. Data is the most effective neutral arbiter: instead of arguing whose request is more important, I present what the data says about impact.

Example (CAI): Sales wanted extensive custom reporting for enterprise clients. Engineering argued it would create technical debt. I collected 6 months of support data showing that 70% of reporting requests came from 4 specific report types. I proposed building those 4 reports as configurable templates, satisfying 70% of the sales use case without the technical debt of a fully custom solution. Both teams accepted the compromise.

24. How do you collaborate with engineering?

The best PM-engineering relationships are built on three principles: transparency (share why, not just what), respect for technical complexity (ask about trade-offs, not just timelines), and joint ownership of outcomes (celebrate wins and analyse failures together). I involve engineers in discovery, not just delivery, so they understand the user problem deeply enough to propose better solutions than the ones I specify.

Example (CAI): On a team where PM-engineering trust was low, I started inviting two engineers to user research sessions on a rotating basis. Within two months, engineers were proactively suggesting solutions during backlog refinement that I would not have thought of. Sprint velocity improved and the number of solution re-dos due to missed requirements dropped by half.

25. How do you influence without authority?

Influence without authority works through three levers: data (objective evidence that removes opinion-based debate), empathy (understanding what the other person is trying to achieve and showing how your proposal helps them), and vision clarity (making the destination so compelling that people want to help reach it). I rarely push harder on a stakeholder who resists — instead I try to understand what is driving the resistance.

Example (CAI): A design team was reluctant to prioritise a redesign of the mobile checkout because they were already over-committed. Rather than escalating, I ran a quick session showing heatmap and session recording data where users were dropping off. When the designers saw the problem in their own medium (visual evidence), they offered to fit a targeted fix into their next sprint rather than a full redesign.

26. How do you ensure alignment across teams?

Alignment degrades fastest in high-velocity organisations where teams are shipping independently. I prevent misalignment by maintaining a shared, visible roadmap accessible to all teams, running cross-functional syncs every two weeks focused on dependencies and upcoming milestones, and writing weekly product updates that go to all stakeholders. Transparency is cheaper than realignment.

Example (CAI): After a product launch where support was blindsided by the new feature and received 200 tickets in the first week (all with known answers), I introduced a ‘launch readiness checklist’ that required sign-off from support, sales, and customer success before any feature could go live. The next three launches had near-zero ticket spikes.

27. What role does UX play in product success?

UX is not decoration — it is the mechanism through which your product strategy reaches the user. A technically excellent feature that is hard to find or confusing to use will not deliver its intended value. I treat UX as a product requirement, not a phase that comes after product definition. The best UX decisions are also the best product decisions.

Example (CAI): A data export feature had been live for six months with under 4% usage. A UX audit revealed it was buried in the settings menu with a non-descriptive label (‘Data Tools’). We moved it to the main dashboard with a contextual prompt. Usage went from under 4% to 31% without any change to the underlying feature.

28. How do you define your target audience?

I define target audiences through segmentation on three axes: demographics (who are they?), psychographics (what do they value, what are they anxious about, what do they aspire to?), and behavioural data (how do they actually use the product, and which behaviours predict retention?). I build user personas from interview data, validated against quantitative cohort analysis.

Example (CAI): For a fitness app, the default assumption was ‘health-conscious adults aged 25-40.’ Cohort analysis revealed that users who onboarded on weekday mornings (before 8 AM) had 3x the 90-day retention of weekend onboarders. Interviews confirmed these were urban professionals who exercise before work. We redesigned the entire onboarding flow and content recommendation engine around that persona and saw overall 30-day retention improve by 19%.

Career and Behavioural Questions (Q29–Q35)

29. Share a time you failed as a product manager.

Interviewers ask about failure to understand three things: your self-awareness, your recovery process, and whether you learned something generalisable. A good failure answer is specific (not vague), takes genuine ownership (without blaming the market or engineering), and ends with a concrete lesson you applied in a subsequent decision.

Example (CAI): I once launched a referral programme that I was confident would drive growth, based on benchmarks from two competitor case studies. I skipped A/B testing the incentive structure and went straight to full launch. Engagement was under 2% of users. Post-mortem revealed that our users primarily used the product in a professional context and were reluctant to refer colleagues to a tool they considered a competitive advantage. The lesson: user motivation for sharing is not transferable across contexts. I now run a motivation validation interview before any virality feature.

30. What is the difference between a product manager and a project manager?

A product manager owns the problem definition and the ‘what and why’ of the product. A project manager owns the execution plan and the ‘when and how’ of delivery. Product managers are externally focused (market, users, business outcomes). Project managers are internally focused (timelines, resources, risk mitigation). In mature organisations, the best products emerge when both roles work in close collaboration.

Example (CAI): On a complex mobile platform migration, I defined the product requirements, user stories, and success metrics. The project manager created the dependency map, managed the sprint schedule across three teams, and flagged resource conflicts two weeks before they became blockers. The combination of roles delivered the project two sprints ahead of schedule. Neither of us could have achieved that independently.

31. What product management trends do you follow?

The most relevant current trends are: AI-augmented product development (LLMs embedded in product workflows, AI-driven personalisation), Product-Led Growth (PLG) as a go-to-market strategy, continuous discovery habits (replacing quarterly research with ongoing weekly user touchpoints), and the shift from feature roadmaps to outcome roadmaps that describe goals rather than deliverables.

Example (CAI): I introduced an AI-powered churn prediction dashboard to a SaaS product I managed. The model scored users on a risk index and triggered a customised retention campaign for high-risk segments two weeks before their subscription renewal. Churn in the flagged segment dropped by 31% in the first quarter after rollout, which represented a meaningful improvement in net revenue retention.

32. How do you approach product discovery?

Product discovery is the ongoing practice of answering ‘what problem is most worth solving, and is our proposed solution actually the right one?’ I treat discovery as a continuous activity — not a phase that happens before each project. I maintain a discovery backlog, run weekly user interviews, review support ticket themes monthly, and hold monthly sessions with sales and customer success to capture demand signals.

Example (CAI): We discovered an unmet need for ‘predictive alerts’ in a supply chain analytics app by systematically reviewing support tickets over three months. 23% of tickets were users manually running the same report every Monday morning to catch the same type of anomaly. We turned that manual process into an automated alert, eliminating those tickets and earning a five-star review from the client’s operations team.

33. Product Manager vs Business Analyst: How do you make the transition?

This question is especially relevant for readers coming from a Business Analyst background. The skills that overlap strongly: requirements gathering, stakeholder communication, process analysis, and documentation. The skills that need development: ownership of business outcomes (not just delivery of requirements), commercial and pricing awareness, and comfort with ambiguity in early-stage discovery.

The fastest path for a BA making the transition: (1) Start treating your current BA work as a PM would — tie every requirement to a business outcome. (2) Get exposure to product analytics tools (Amplitude, Mixpanel, or Google Analytics). (3) Learn at least one prioritisation framework (RICE or Kano) and apply it to your current project. (4) Seek roles with ‘Product Analyst’ or ‘Associate PM’ in the title as a bridge role.

Techcanvass offers structured training that bridges BA skills with PM methodology. See our Product Management Course for a practical curriculum designed for working professionals.

34. Why do you want to be a product manager?

Interviewers are checking for genuine motivation, not a rehearsed answer. The most compelling ‘why PM’ answers connect a specific problem you have experienced (as a user, as a BA, as an engineer) with the realisation that the PM role is uniquely positioned to solve problems at scale. Avoid generic answers (‘I love technology and people’) and focus on a specific origin story.

Example (CAI): ‘As a Business Analyst at a healthcare company, I kept writing requirements for features that solved the stated problem but missed the underlying user need. I watched a well-specified feature launch and receive zero adoption because the users had worked around the problem in a different way while we were building. I became a PM to own the problem definition phase — not just the solution handoff. I wanted to be the person who goes back to the user before the sprint starts.’

35. How do you handle disagreement with your engineering lead?

Disagreements with engineering are almost always about trade-offs, not about who is right. The most productive approach is to make the trade-off explicit: ‘If we do X, we get A but sacrifice B. If we do Y, we get B but risk A.’ This frames the conversation as a joint decision about priorities rather than a test of authority. I also accept that engineers have context about technical risk that I do not, and I factor that in.

Example (CAI): My engineering lead recommended against a feature because of database scaling concerns. I did not override the technical judgment. Instead, I asked what level of scale would be needed to revisit the decision. The answer was 50,000 active users. I agreed to schedule the feature for the quarter after we crossed that threshold. We crossed it in month 5 and shipped the feature on schedule.

Senior PM and Leadership Interview Questions (Q36–Q42)

36. How do you build and lead a product team?

Building a product team starts with hiring for intellectual curiosity and communication ability over pure domain expertise — you can teach a smart person about a market, but you cannot teach someone to think rigorously or write clearly. I define clear ownership boundaries early (who owns which part of the product) and establish shared rituals: weekly product reviews, monthly retrospectives, and quarterly strategy reviews.

Example (CAI): After inheriting a team of two PMs with unclear responsibilities, I mapped the entire product into ownership domains and assigned each PM a domain with clear success metrics. Within one quarter, the PMs were running their domains independently and escalating only genuine cross-domain conflicts, freeing me to spend more time on strategy and external stakeholder management.

37. How do you develop a product strategy?

Product strategy answers ‘where are we going, and why is this the right place to go?’ It requires a clear diagnosis of the current situation (market position, user needs, competitive landscape), a set of guiding policies (what we will and will not do), and a coherent set of actions that flow from those policies. Strategy without diagnosis is just aspiration.

Example (CAI): A product I joined had no clear differentiation — it was competing on features with better-resourced competitors and losing. I ran a customer segmentation analysis and found that 30% of our highest-revenue customers were in a niche vertical we had never explicitly targeted. I proposed a vertical focus strategy, which included a dedicated product track for that vertical. Within 18 months, that segment represented 52% of ARR.

38. How do you manage a go-to-market strategy?

Go-to-market strategy bridges product and commercial teams. My role as PM in a GTM is to: define the target customer segment and their key pain points, articulate the unique value proposition clearly enough that sales and marketing can use it without me in the room, define pricing tiers aligned to value delivered, and create the internal enablement materials (product sheets, demo scripts, FAQ docs) that help sales close.

Example (CAI): For an AI-based analytics tool targeting SMBs, we defined a GTM strategy built around LinkedIn outreach and webinar-led education rather than paid ads (budget constraint). I created a 45-minute live demo script that marketing ran weekly. In the first month, 500 SMB sign-ups came through the webinar funnel, which was 3x the target.

39. How do you handle a major product failure post-launch?

Post-launch failures require immediate triage, transparent communication, and a rapid learning cycle. The first 24 hours are about damage containment: quantify the impact, notify affected stakeholders, and decide whether to roll back or patch forward. The first 48 hours are about root cause analysis. The first week is about the post-mortem and the plan to prevent recurrence.

Example (CAI): A feature release caused a 15% spike in support tickets within the first hour of launch. I initiated an incident call immediately, identified the issue (a change to date formatting that broke integrations for clients in non-US timezones), and coordinated a hotfix within 4 hours. The post-mortem revealed our QA environment did not test for timezone edge cases. We added a timezone test suite to our CI/CD pipeline.

40. How do you approach executive communication?

Executives need signal, not noise. They want to know: is the product on track to hit its targets, what are the top 3 risks, and what decisions do they need to make? I structure executive updates as a single-page summary covering the metric scorecard, key milestones hit or missed, top 3 risks with mitigations, and a single ‘decision needed from leadership’ section. I reserve detailed analysis for appendix documents.

Example (CAI): A monthly product review meeting had grown to 90 minutes with mixed engagement. I proposed switching to a 20-minute standing update using a standardised one-pager, with a separate 60-minute deep-dive session held quarterly. The standing update format was adopted across three product lines within two months because leadership found it significantly more actionable.

41. How do you prioritise at a portfolio level?

Portfolio-level prioritisation requires a different lens than feature-level prioritisation. I use strategic alignment scoring alongside RICE — a feature that scores highly on RICE but pulls the product away from its core strategic positioning should still be deprioritised. At portfolio level, I also consider the opportunity cost across product lines, not just within a single product.

Example (CAI): With three products competing for the same engineering resources, I introduced a portfolio prioritisation matrix that scored each initiative on strategic alignment, revenue impact, customer commitment (existing contracts requiring the feature), and effort. The matrix resolved a long-standing conflict between two product lines about shared engineering time.
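
The matrix in this example can be sketched as a simple weighted score. The snippet below is an illustration only, not the actual matrix used: the four criteria come from the example, but the weights, the 1-5 scale, and the initiative names are hypothetical assumptions.

```python
from dataclasses import dataclass

# Hypothetical weights: the example names the four criteria but not their weighting.
WEIGHTS = {
    "strategic_alignment": 0.35,
    "revenue_impact": 0.30,
    "customer_commitment": 0.20,
    "effort": 0.15,
}


@dataclass
class Initiative:
    name: str
    strategic_alignment: int  # 1 (low) to 5 (high)
    revenue_impact: int       # 1 (low) to 5 (high)
    customer_commitment: int  # 1 (none) to 5 (contractually required)
    effort: int               # 1 (small) to 5 (large)


def portfolio_score(item: Initiative) -> float:
    """Weighted sum of the criteria; effort is inverted so smaller efforts score higher."""
    total = (
        WEIGHTS["strategic_alignment"] * item.strategic_alignment
        + WEIGHTS["revenue_impact"] * item.revenue_impact
        + WEIGHTS["customer_commitment"] * item.customer_commitment
        + WEIGHTS["effort"] * (6 - item.effort)
    )
    return round(total, 2)


# Made-up initiatives from three product lines competing for one engineering team.
initiatives = [
    Initiative("Product A: single sign-on", 4, 3, 5, 2),
    Initiative("Product B: new dashboard", 2, 4, 1, 4),
    Initiative("Product C: billing revamp", 5, 4, 3, 3),
]

ranked = sorted(initiatives, key=portfolio_score, reverse=True)
for item in ranked:
    print(f"{item.name}: {portfolio_score(item)}")
```

The value of a matrix like this is less the final number than the conversation it forces: each product line has to argue about the inputs and weights in the open rather than about whose request matters more.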

42. How do you mentor junior PMs?

Mentoring junior PMs works best through structured debriefs on real decisions rather than theoretical frameworks. After any significant product decision — a prioritisation call, a stakeholder conflict resolution, a post-launch analysis — I run a 30-minute debrief with the junior PM asking three questions: what decision was made, what options were considered, and what would they have done differently? This builds decision pattern recognition faster than training courses.

Example (CAI): A junior PM on my team was struggling with stakeholder pushback on roadmap decisions. Rather than giving advice, I invited her to shadow three of my stakeholder conversations and then debrief afterward. Within six weeks she was handling her own stakeholder conversations independently and explicitly named the tactics she had picked up (‘leading with data’ and ‘naming the trade-off explicitly’) as techniques she had observed.

Technical Product Manager Interview Questions (Q43–Q50)

43. What technical skills do you need as a product manager?

The technical depth required varies by company and product type. Every PM needs three things: enough SQL to run basic queries and validate analytics data without always depending on a data analyst, an understanding of REST APIs and data models (so you can scope integration features accurately), and conceptual familiarity with your company’s tech stack. Technical PM roles add system design basics, an understanding of infrastructure trade-offs, and experience reading and writing technical specifications.

Example (CAI): As a PM on a data platform product, I learned enough SQL to write my own cohort analysis queries. This meant I could validate product hypotheses in 10 minutes rather than waiting 2-3 days for a data analyst. It also increased my credibility with engineers significantly.
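
A cohort query of the kind mentioned above might look like the following. This is a minimal sketch that uses Python's built-in `sqlite3` module so it runs anywhere; the `activity` table, its columns, and the sample rows are hypothetical, and "retained" is assumed here to mean "active in any month after the signup month".

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE activity (user_id INTEGER, signup_month TEXT, active_month TEXT)"
)

# Hypothetical rows: one row per user per month in which they were active.
rows = [
    (1, "2025-01", "2025-01"), (1, "2025-01", "2025-02"),
    (2, "2025-01", "2025-01"),
    (3, "2025-02", "2025-02"), (3, "2025-02", "2025-03"),
    (4, "2025-02", "2025-02"), (4, "2025-02", "2025-03"),
]
conn.executemany("INSERT INTO activity VALUES (?, ?, ?)", rows)

# Cohort size and retained users per signup month.
# 'YYYY-MM' strings sort chronologically, so a plain string comparison is enough.
query = """
SELECT signup_month,
       COUNT(DISTINCT user_id) AS cohort_size,
       COUNT(DISTINCT CASE WHEN active_month > signup_month
                           THEN user_id END) AS retained
FROM activity
GROUP BY signup_month
ORDER BY signup_month
"""
results = conn.execute(query).fetchall()

for signup_month, cohort_size, retained in results:
    print(f"{signup_month}: {retained}/{cohort_size} retained ({retained / cohort_size:.0%})")
```

In an interview, being able to describe a query like this in words (group users by signup month, then count how many come back later) is usually enough; the syntax matters less than showing you know what question the data can answer.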

44. How do you write a technical PRD (Product Requirements Document)?

A good technical PRD answers five questions: What problem are we solving and for whom? What does success look like (measurable outcome)? What are the user stories and acceptance criteria? What are the edge cases, error states, and non-functional requirements? And what are the open questions that engineering needs to resolve before estimates? A PRD should make the build easier, not harder.

Example (CAI): I created a PRD template for my team that included a mandatory section called ‘What we are NOT building’ — listing explicitly excluded use cases. This single addition reduced mid-sprint scope changes by 35% because it forced clarity on constraints before the sprint began.

45. How do you work with data teams?

The most effective PM-data team relationship is built on shared metric definitions. If the PM and the data team define ‘active user’ differently, all analysis is inaccurate. I invest time early in every product initiative to co-create a ‘metrics glossary’ — a shared document that defines every metric used in the initiative, including how it is calculated, what data source it pulls from, and what the refresh frequency is.

Example (CAI): After discovering that the PM team and the data team had two different definitions of ‘conversion rate’ that diverged by 8 percentage points, I facilitated a metrics alignment session across both teams. The outcome was a company-wide metrics glossary published in Confluence. Three subsequent strategic decisions were made with higher confidence because everyone was working from the same numbers.
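
To see how two teams can both be "right" and still disagree, consider the sketch below. The numbers are invented for illustration, and the two formulas are just plausible candidates, not the definitions used in the example above. It also shows one possible shape for a glossary entry covering the three fields mentioned: calculation, data source, and refresh frequency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    formula: str      # how the metric is calculated
    data_source: str  # where the numbers come from
    refresh: str      # how often the figure is refreshed


# Hypothetical traffic for one week.
sessions = 10_000
unique_visitors = 6_000
orders = 600

# Two plausible but different definitions of "conversion rate".
per_session = orders / sessions         # orders divided by sessions
per_visitor = orders / unique_visitors  # orders divided by unique visitors

gap_in_points = (per_visitor - per_session) * 100
print(
    f"per session: {per_session:.1%}, per visitor: {per_visitor:.1%}, "
    f"gap: {gap_in_points:.1f} percentage points"
)

# One agreed definition, written down where both teams can find it.
glossary = {
    "conversion_rate": MetricDefinition(
        name="Conversion rate",
        formula="orders / unique_visitors",
        data_source="orders table joined to web analytics (hypothetical)",
        refresh="daily",
    )
}
```

Neither formula is wrong; the problem only appears when one team reports 6% and the other 10% for the same week without saying which denominator they used.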

46. How do you evaluate build vs. buy vs. partner decisions?

The build vs. buy vs. partner decision is fundamentally a make-or-buy analysis with a strategic lens added. Build when the capability is a core differentiator and no external option exists at the quality needed. Buy (via off-the-shelf software) when the capability is commodity and integrating a vendor solution is faster and cheaper than building. Partner when the capability is strategic but your core competency is not in that domain.

Example (CAI): A product team was considering building an in-house video conferencing feature. I ran a build-vs-buy analysis: build estimate was 6 months and 3 engineers. A vendor API (Twilio Video) would take 3 weeks to integrate. Since video quality was not a differentiator for our product (it was a supporting feature), we integrated the vendor solution and redirected the saved engineering capacity to a core differentiator — the AI-powered recommendation engine.

47. How do you manage API products?

API products require a different user empathy model — your users are developers, not end-users. Developer experience (DX) is the equivalent of UX for API products. Key DX principles: clear, consistent documentation (auto-generated API docs are rarely sufficient), predictable versioning with long deprecation windows, sandbox environments that closely mirror production, and fast resolution of developer support tickets.

Example (CAI): After a major API version upgrade, partner developers complained about the migration being undocumented. I introduced a ‘migration guide’ as a mandatory deliverable for every API version change, written by the PM rather than delegated to engineering. Developer support tickets per API version dropped by 60% on the next release.

48. How do you approach AI product management?

AI product management adds specific challenges not present in conventional software: model performance is probabilistic (not deterministic), bias and fairness are product requirements (not just ethical considerations), and user trust is fragile (one surprising AI output can destroy weeks of user confidence). I add ‘AI behaviour specifications’ to PRDs: defining what the model should do, what it should not do, and how errors should be presented to users.

Example (CAI): For an AI-based resume screening tool, early user testing revealed that hiring managers did not trust rejections made by the AI without explanation. I added an ‘explanation card’ to every AI recommendation — a plain-language summary of the top 3 factors in the decision. Trust scores in user testing improved from 38% to 71%, and the product achieved its adoption targets ahead of schedule.

49. How do you measure the success of an AI feature?

AI feature metrics operate at two levels: model metrics and product metrics. Model metrics (accuracy, precision, recall, F1 score) tell you if the model is performing well technically. Product metrics (task completion rate, time-saved per task, user adoption of AI recommendations) tell you if the model’s performance is translating into user value. I track both and ensure model improvements are measured against product outcomes, not just technical benchmarks.

Example (CAI): A content recommendation algorithm had strong recall (it surfaced most of the content relevant to each user) but low product adoption: users were not clicking the recommendations. Investigation revealed the recommendations were accurate but appeared at a point in the user journey where the user was not in ‘discovery mode.’ Moving the recommendation surface to the post-action state (after a user completed a task) increased recommendation clicks by 3.4x with no change to the underlying model.
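
The two levels of measurement can be made concrete with a small calculation. The sketch below uses the standard definitions of precision, recall, and F1; the confusion-matrix counts and the click numbers are hypothetical and are not taken from the example above.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Model metrics (hypothetical offline evaluation):
# 80 relevant items recommended, 20 irrelevant items recommended,
# 20 relevant items the model failed to recommend.
precision, recall, f1 = precision_recall_f1(tp=80, fp=20, fn=20)

# Product metric (hypothetical): how often users act on what they are shown.
recommendations_shown = 5_000
recommendations_clicked = 150
click_through_rate = recommendations_clicked / recommendations_shown

print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
print(f"click-through rate={click_through_rate:.1%}")
```

A model can look healthy on the first line and still fail on the second, which is why both belong on the same dashboard.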

50. What product management trends will shape the next 2-3 years?

Three trends will significantly reshape PM work in the near term: (1) AI co-pilots in product development tools — PMs will increasingly use LLM-based tools to accelerate user research synthesis, PRD drafting, and competitive analysis. The skill shift is from execution to curation. (2) Product-Led Growth becoming the default GTM for B2B SaaS — freemium, in-product trials, and usage-based pricing are replacing enterprise sales-led models. (3) Outcome roadmaps replacing feature roadmaps as boards and investors demand accountability for business outcomes, not just shipping.

Example (CAI): I used an LLM to synthesise 120 user interview transcripts into a structured affinity map in 4 hours — a process that previously took a research team two weeks. The time saved was reinvested in more user interviews, improving the quality of our discovery sprint output. PMs who learn to use AI as a research and synthesis tool will have a significant productivity advantage.

Product Manager Salary in India and the United States (2025-2026)

Understanding PM compensation helps you evaluate offers, negotiate confidently, and benchmark your market value. The figures below are based on aggregated data from LinkedIn Salary, Glassdoor, and AmbitionBox as of early 2026.

India (Approximate INR per annum, Base Salary):

| Level | Years of Experience | Base Salary Range (INR) | Top Percentile (INR) | Common Employers |
|---|---|---|---|---|
| Associate / Junior PM | 0-2 years | 6-12 LPA | 15 LPA | Startups, D2C brands, IT services firms |
| Product Manager (Mid) | 2-5 years | 15-30 LPA | 40 LPA | SaaS, fintech, e-commerce (Flipkart, Razorpay, etc.) |
| Senior Product Manager | 5-8 years | 30-50 LPA | 70 LPA | FAANG India, Series B-D startups |
| Group PM / Principal PM | 8-12 years | 50-90 LPA | 1.2 Cr+ | Unicorns, public tech companies |
| Director of Product / VP | 12+ years | 90 LPA – 2 Cr+ | 3 Cr+ | Large enterprise tech, IPO-stage startups |

United States (Approximate USD per year, Base Salary):

| Level | Years of Experience | Base Salary Range (USD) | Total Comp with Equity (USD) | Common Employers |
|---|---|---|---|---|
| Associate PM / APM | 0-2 years | $80K-$120K | $100K-$160K | Mid-sized tech companies, APM programmes |
| Product Manager | 2-5 years | $120K-$160K | $150K-$220K | SaaS, fintech, healthtech |
| Senior Product Manager | 5-8 years | $160K-$200K | $220K-$350K | Mid to large tech companies |
| Principal / Group PM | 8-12 years | $200K-$250K | $300K-$500K | FAANG, public tech companies |
| Director / VP of Product | 12+ years | $220K-$320K | $500K-$1M+ | FAANG, unicorns, public companies |

INR figures are approximate and vary significantly based on company stage, funding, and city. Bengaluru and NCR typically pay 10-20% above the national average for PM roles.

Source: Glassdoor, AmbitionBox 2025-26 data.

Product Manager vs Business Analyst: Key Differences

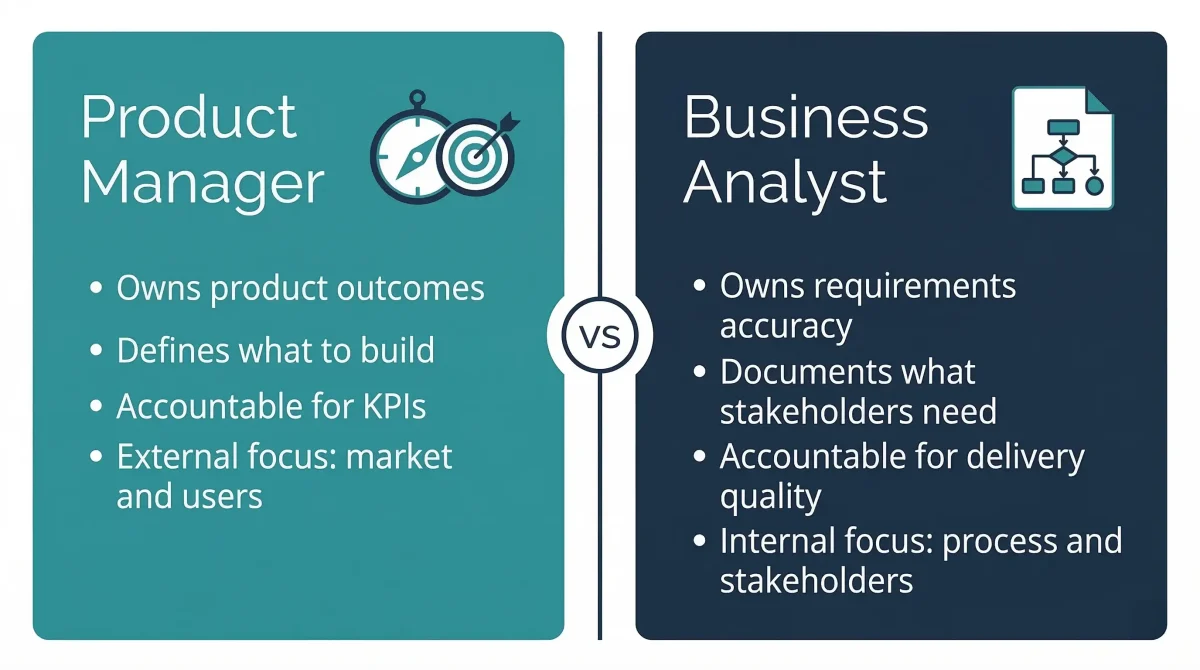

For professionals working in Business Analysis who are exploring a move into product management, understanding the key differences between the two roles is the first step. The roles overlap significantly in practice, but differ in ownership, focus, and accountability.

| Dimension | Product Manager | Business Analyst |
|---|---|---|
| Primary Accountability | Product outcomes (revenue, retention, adoption) | Requirements accuracy and stakeholder communication |
| Decision Authority | Owns the ‘what and why’ of the product | Informs and documents decisions made by others |
| User Research | Owns user discovery and problem definition | Supports requirements gathering from stakeholders |
| Business Outcomes | Directly accountable for product P&L and KPIs | Indirectly accountable through delivery quality |
| Roadmap Ownership | Owns and communicates the product roadmap | Typically does not own the roadmap |
| Technical Involvement | Defines acceptance criteria; not expected to code | Writes detailed functional specs; closer to technical detail |
| Typical Next Role | Senior PM, Group PM, Director of Product | Senior BA, Product Owner, Business Architect |
| Transferable Skills | Stakeholder management, documentation, process analysis | All BA skills are strong foundations for PM work |

If you are a Business Analyst looking to build the skills needed to make this transition, Techcanvass offers structured training programmes designed for working professionals at this exact inflection point. Not sure if you are ready for the PM move yet? Start with our Business Analyst career guide to assess where you stand.

Frequently Asked Questions: Product Manager Interviews

How should I prepare for a product manager interview?

The most effective preparation combines framework study, practice with real case questions, and storytelling preparation. Start by learning two or three prioritisation frameworks well (RICE and MoSCoW are the most commonly tested) and one product discovery framework (Jobs to Be Done or Continuous Discovery Habits).

Then prepare a ‘story bank’ of 8 to 10 real experiences from your career that demonstrate outcomes, not just activities. Each story should include a specific metric or result. Practice answering questions out loud, not in writing — the verbal delivery of structured answers is a separate skill from the ability to write them. Use the Context-Action-Impact format for every experience-based answer.

Dedicate time to company-specific preparation: review the company’s recent product announcements, understand their current pricing model, and come prepared with at least one improvement idea for their product that is grounded in user feedback you have personally observed.

How many rounds are there in a product manager interview?

A typical PM interview process at a mid-sized to large tech company involves four to six rounds. The first round is usually a recruiter screen focused on background, motivation, and salary expectations. The second round is often a hiring manager screen covering career history and basic product thinking.

Rounds three and four typically involve case study exercises, one focused on product design or strategy and one on analytical or metrics reasoning. Round five is often a cross-functional panel interview where you meet with engineering, design, or marketing stakeholders. FAANG companies may add additional rounds including a written take-home case study or an executive presentation.

What are the most common mistakes candidates make in product manager interviews?

The three most common mistakes are: jumping to solutions before defining the problem, using vague answers without metrics or evidence (‘I improved the user experience’ rather than ‘I reduced cart abandonment from 52% to 38%’), and failing to ask clarifying questions at the start of a case study.

Additional mistakes include not acknowledging trade-offs in prioritisation, over-engineering frameworks instead of focusing on substance, and not having prepared questions for the interviewer at the end of the round.

What is the RICE framework, and how should I use it in an interview?

RICE stands for Reach, Impact, Confidence, and Effort. It is a scoring model used to prioritise initiatives when multiple options compete for limited resources. The score is calculated as (Reach x Impact x Confidence) / Effort. Higher scores indicate higher priority.

In an interview, use RICE to demonstrate data-driven thinking when asked to prioritise features. Always acknowledge its limitations: it’s only as good as your input estimates and works better for features with similar scope rather than strategic directional shifts.
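
The formula above is easy to work through by hand, and a short script makes the mechanics explicit. This is a minimal sketch: the feature names and every input value are made up, and the units in the comments (users per quarter, a 0.25 to 3 impact scale, person-months) are one common convention rather than something this guide prescribes.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # assumed unit: users affected per quarter
    impact: float      # assumed scale: 0.25 (minimal) up to 3 (massive)
    confidence: float  # 0.0 to 1.0
    effort: float      # assumed unit: person-months


def rice_score(feature: Feature) -> float:
    """(Reach x Impact x Confidence) / Effort."""
    if feature.effort <= 0:
        raise ValueError("effort must be positive")
    return feature.reach * feature.impact * feature.confidence / feature.effort


# Hypothetical backlog.
backlog = [
    Feature("Onboarding checklist", reach=2000, impact=2, confidence=0.8, effort=4),
    Feature("Dark mode", reach=5000, impact=0.5, confidence=0.9, effort=3),
    Feature("Bulk export", reach=800, impact=3, confidence=0.5, effort=2),
]

ranked = sorted(backlog, key=rice_score, reverse=True)
for feature in ranked:
    print(f"{feature.name}: {rice_score(feature):.0f}")
```

With these invented inputs the onboarding checklist scores 800, dark mode 750, and bulk export 600. Note how close the top two are: lowering the checklist's confidence from 0.8 to 0.7 would flip the order, which illustrates the limitation mentioned above that the score is only as good as the estimates behind it.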

How do I structure an answer to a product design question?

The standard framework has five steps: clarify goals and constraints, identify and segment target users, define the top 2-3 user problems (JTBD lens), propose solutions with prioritisation, and define success metrics.

A strong answer takes 15 to 20 minutes. Spend at least 3 minutes on user definition before proposing features. If unsure about a user type, state your assumption and ask the interviewer for alignment.

What is the difference between a Product Manager and a Product Owner?

A Product Manager is externally focused: responsible for market research, discovery, strategy, and roadmap definition. A Product Owner is internally focused: responsible for backlog management, user stories, and serving as the primary interface for the development team in Agile processes.

In large organisations, these are distinct roles; in startups, one person typically fills both. Explaining this distinction shows maturity in understanding organisational structures.

How do I answer the ‘What is your favourite product?’ question?

Choose a product you use deeply. Structure your answer around three dimensions: the user problem it solves elegantly, specific product decisions that show great thinking, and one specific improvement you would make as its PM.

The improvement section is crucial—it shows critical thinking and the mindset that no product is ever perfect.

What is Product-Led Growth (PLG), and how does it come up in interviews?

PLG is a strategy where the product itself drives acquisition, conversion, and expansion (e.g., Slack, Notion). In interviews, PLG comes up in strategic questions about onboarding, freemium metrics, and viral growth features.

Know these terms: time-to-value, activation rate, expansion revenue, and viral coefficient to demonstrate strategic sophistication.

How long should my interview answers be?

For warm-up questions, aim for 90-120 seconds. For experience-based (CAI format) questions, aim for 2-3 minutes. Case study questions are conversations that can run 15-25 minutes.

Avoid being too brief (no evidence) or too long (signals poor communication). Aim for crisp delivery with specific details.

Is a product management certification or course worth it?

It is worth it if it fills a specific gap, like transitioning from Business Analysis or Engineering. It provides the frameworks, vocabulary, and projects needed to compete. For experienced PMs, measurable outcomes carry more weight than credentials.

For BAs specifically, a bridging programme is the fastest way to build competency compared to a generic PM course.
